Home

Featured Writing

The Award Tour is not the tour after you win the award; it's the entire journey that gets you there and everything that takes place after.

The most important aspect of goal-setting is visualizing the person I will be after achieving the goal.

My bike accident on September 13th forced me to rethink the way I approach life and routines.

Featured Projects

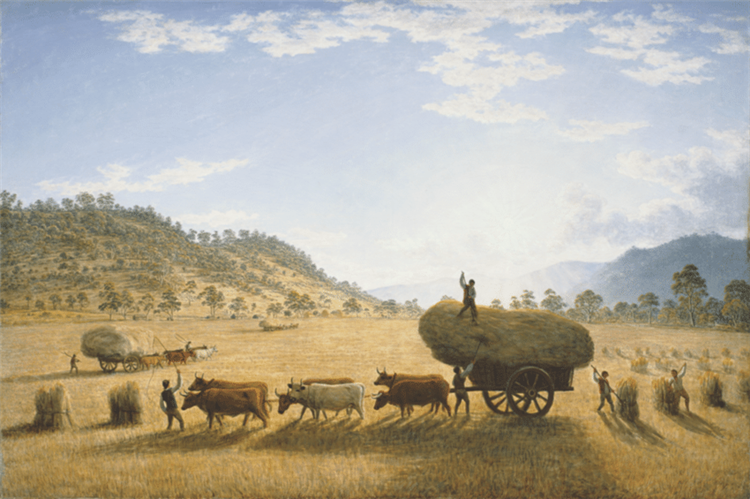

This table contains over 1,700 unique names found in the Trans-Atlantic Slave Trade Database and their popularity in the United States over time, as reported by the Social Security Administration. Each row contains a bar chart of the number of babies given that name each year. The table is searchable and filterable. I built this project as a proof of concept using Sparklines. The bar charts within the table provide an interesting way to consume the data, but they come with challenges. Without axes, it’s hard to know which year a specific bar corresponds to. Despite the chart's limitations, I still find it refreshing to consume.

This is a map of alcohol distilleries in the United States, with data from the U.S. Department of the Treasury. The underlying map color (polygons) represents county median income as reported by the U.S. Census. Each dot represents a distillery.